Social Media Deliberately Engineered for Addiction

Overview

The claim that major social media platforms were deliberately designed to exploit psychological vulnerabilities and maximize addictive engagement is not a speculative conspiracy theory but a confirmed reality acknowledged by multiple former executives and designers of these very platforms. Unlike most entries in conspiracy theory literature, this one achieved its “confirmed” status through direct admissions from the people who built the systems in question.

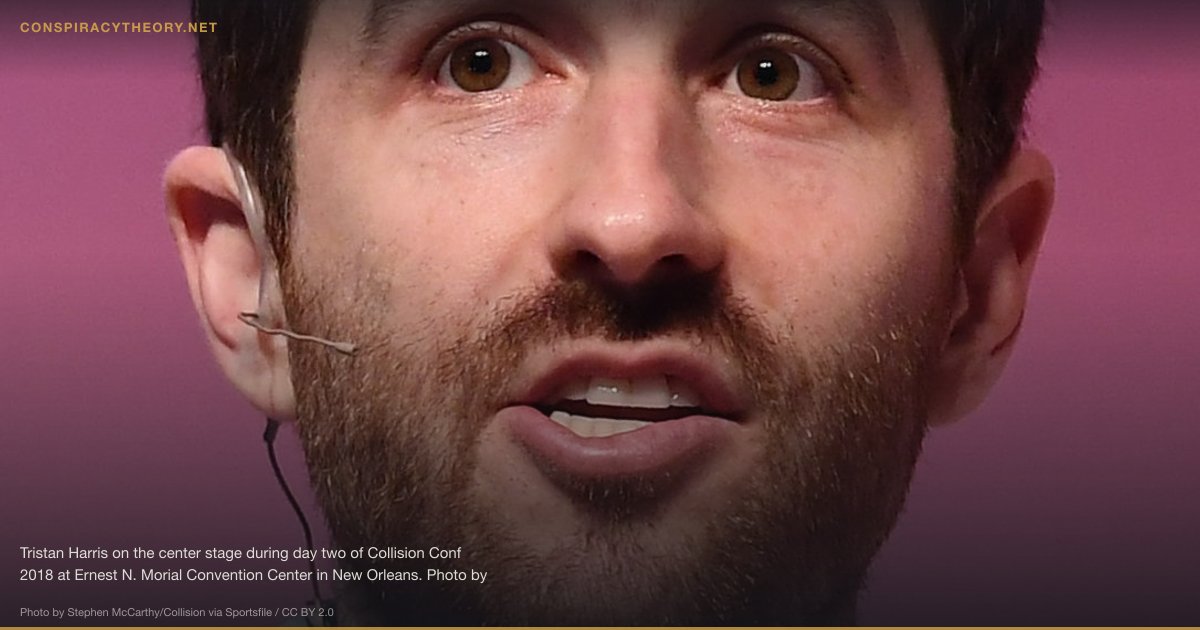

Beginning around 2017, a series of former Silicon Valley insiders broke ranks to publicly describe how platforms including Facebook, Instagram, Twitter, YouTube, and Snapchat were consciously engineered using behavioral psychology techniques to create compulsive usage patterns. These admissions described the deliberate deployment of variable reward schedules, social validation feedback loops, and attention-capture mechanisms drawn from academic research on addiction and persuasion. These first disclosures were not the result of external investigation or leaked documents, but of voluntary public statements by individuals including former Facebook president Sean Parker, former Facebook VP of Growth Chamath Palihapitiya, and former Google design ethicist Tristan Harris.

The significance of these revelations extends beyond the technology industry into public health, democratic governance, and the rights of minors. Research has subsequently linked heavy social media use to increased rates of depression, anxiety, and self-harm among adolescents, and the deliberate design of addictive features has become a central issue in regulatory debates across multiple countries.

Origins & History

The intellectual foundations of addictive social media design trace to Stanford University’s Persuasive Technology Lab, founded by B.J. Fogg in 1998. Fogg developed a behavioral model positing that a behavior occurs only when three elements are present at the same moment: sufficient motivation, sufficient ability, and a trigger. His research on “captology” (computers as persuasive technology) influenced a generation of Silicon Valley product designers, many of whom attended his Stanford courses before going on to leadership roles at major technology companies. Fogg’s students included Mike Krieger, co-founder of Instagram, and Nir Eyal, who later wrote the influential book Hooked: How to Build Habit-Forming Products (2014), essentially a manual for creating addictive digital products.
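The convergence idea in Fogg's model can be illustrated with a small sketch. The 0-to-1 scales, the multiplicative rule, the threshold value, and the names `Moment` and `behavior_occurs` are all assumptions made for illustration; they are not Fogg's published formulation.

```python
from dataclasses import dataclass


@dataclass
class Moment:
    motivation: float  # 0.0 (none) to 1.0 (strong); illustrative scale
    ability: float     # 0.0 (very hard) to 1.0 (effortless); illustrative scale
    trigger: bool      # is a prompt, such as a notification, present right now?


def behavior_occurs(m: Moment, threshold: float = 0.25) -> bool:
    """Assumed rule: a trigger leads to action only if motivation x ability clears a threshold."""
    return m.trigger and m.motivation * m.ability >= threshold


# High motivation and ability, but nothing prompts the user: no behavior.
print(behavior_occurs(Moment(motivation=0.9, ability=0.9, trigger=False)))
# Modest motivation, but the action is effortless and a prompt arrives.
print(behavior_occurs(Moment(motivation=0.3, ability=1.0, trigger=True)))
```

Under these assumptions, a designer can raise the chance of a behavior without touching motivation at all, simply by making the action easier and sending more prompts.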

The mechanics of addictive design borrowed heavily from the gambling industry and behavioral psychology research on variable reinforcement schedules, first described by B.F. Skinner in the mid-20th century. Skinner demonstrated that pigeons and rats responded most compulsively to rewards delivered at unpredictable intervals rather than on fixed schedules. Social media platforms replicated this principle through features such as the pull-to-refresh gesture (mimicking a slot machine lever), notification systems calibrated to deliver social validation unpredictably, and algorithmic feeds that intersperse engaging content at variable intervals.
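The difference between a fixed and an unpredictable reward schedule can be seen in a toy simulation. This is a minimal sketch, not any platform's code: the variable case is modeled as a random-ratio schedule (each check is rewarded independently with a fixed probability), and the function names, the 1-in-5 rate, and the seed are illustrative assumptions.

```python
import random


def fixed_schedule(n_checks, every=5):
    """Reward every `every`-th check: the timing is fully predictable."""
    return [(i + 1) % every == 0 for i in range(n_checks)]


def variable_schedule(n_checks, mean_every=5, seed=0):
    """Reward each check with probability 1/mean_every: similar average rate, unpredictable timing."""
    rng = random.Random(seed)
    return [rng.random() < 1 / mean_every for _ in range(n_checks)]


def gaps(rewards):
    """Number of checks between consecutive rewards."""
    hits = [i for i, rewarded in enumerate(rewards) if rewarded]
    return [b - a for a, b in zip(hits, hits[1:])]


fixed = fixed_schedule(1000)
variable = variable_schedule(1000)
print("fixed:   ", sum(fixed), "rewards, gap sizes", set(gaps(fixed)))
print("variable:", sum(variable), "rewards, gaps from",
      min(gaps(variable)), "to", max(gaps(variable)))
```

Both schedules pay out at roughly the same average rate, but only the variable one leaves the user unable to tell whether the next check will be the one that pays off.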

Facebook’s “Like” button, introduced in 2009, became perhaps the most consequential implementation of variable reinforcement in digital history. Designed by Leah Pearlman and Justin Rosenstein, the button transformed social posting from an act of self-expression into a gamified pursuit of measurable social approval. Rosenstein later described Likes as “bright dings of pseudo-pleasure” and installed parental controls on his own phone to limit his Facebook usage.

In 2006, Aza Raskin invented the infinite scroll while working at Humanized, a user interface company. The feature, which loads new content continuously as a user scrolls, eliminated the natural stopping points that pagination had previously provided. Raskin later expressed profound regret, estimating that infinite scroll wastes the equivalent of roughly 200,000 human lifetimes every day. He became a vocal critic of addictive design and co-founded the Center for Humane Technology.
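The structural difference between pagination and infinite scroll can be sketched in a few lines. This is an illustrative model only: `fetch_page` stands in for a hypothetical backend call, and the page size and function names are assumptions rather than any platform's implementation.

```python
from itertools import islice

PAGE_SIZE = 10


def fetch_page(items, page):
    """Hypothetical backend call: return one fixed-size slice of the feed."""
    start = page * PAGE_SIZE
    return items[start:start + PAGE_SIZE]


def paginated_session(items, clicks_on_next):
    """The reader sees one page, then must click 'next'; every page end is a stopping point."""
    seen = []
    for page in range(clicks_on_next + 1):
        seen.extend(fetch_page(items, page))
    return seen


def infinite_scroll(items):
    """Yield items continuously, fetching the next page automatically when one runs out."""
    page = 0
    while True:
        batch = fetch_page(items, page)
        if not batch:
            return
        yield from batch
        page += 1


posts = [f"post-{i}" for i in range(45)]
# Without a deliberate click, a paginated reader stops at the first page boundary.
print(len(paginated_session(posts, clicks_on_next=0)))
# An infinite-scroll reader crosses page boundaries without noticing them.
print(len(list(islice(infinite_scroll(posts), 37))))
```

In the paginated version, continuing requires an explicit decision at each boundary. In the generator version, the boundary still exists on the backend but is never shown to the reader.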

The dam broke publicly in late 2017. In November, Sean Parker told an Axios audience that Facebook was designed to exploit “a vulnerability in human psychology” and that the platform’s architects, including Mark Zuckerberg, understood this from the beginning. “The thought process was: ‘How do we consume as much of your time and conscious attention as possible?’” Parker said. “And that means that we need to sort of give you a little dopamine hit every once in a while, because someone liked or commented on a photo or a post or whatever.”

In remarks at Stanford that drew wide attention the following month, Chamath Palihapitiya, who served as Facebook’s VP of Growth from 2007 to 2011, said: “The short-term, dopamine-driven feedback loops that we have created are destroying how society works.” He said he felt “tremendous guilt” about the company he helped build and that his children were “not allowed to use that shit.”

Key Claims

- Social media platforms including Facebook, Instagram, TikTok, YouTube, Twitter, and Snapchat were deliberately designed using behavioral psychology to create compulsive usage patterns

- Features such as likes, infinite scroll, push notifications, autoplay, and streaks function as variable reinforcement mechanisms borrowed from gambling addiction research

- B.J. Fogg’s Persuasive Technology Lab at Stanford provided the intellectual framework and trained many of the designers who implemented these systems

- Platform companies employ teams of psychologists and behavioral scientists specifically to increase “engagement,” a euphemism for addictive usage

- Internal research at these companies documented harm to users, particularly adolescents, but was suppressed in favor of continued growth

- The attention economy business model creates a structural incentive to maximize time-on-platform regardless of user well-being

- Algorithmic feeds are tuned to prioritize emotionally provocative content because anger and outrage generate more engagement than neutral or positive content

- The designers of these systems have publicly acknowledged the addictive properties were intentional, not accidental

Evidence

The evidence for deliberate addictive design in social media is exceptionally strong, comprising both direct testimony from platform insiders and extensive academic research.

The most compelling evidence comes from the designers and executives themselves. Sean Parker’s 2017 admission that Facebook was built to exploit psychological vulnerabilities was not a whistleblower revelation but a casual confirmation at a public event. Chamath Palihapitiya’s Stanford remarks expressed “tremendous guilt” about the feedback loops Facebook created. Justin Rosenstein, who helped design Facebook’s Like button, described installing parental controls on his own phone and compared Snapchat to heroin. Tristan Harris, a former Google design ethicist, left the company and later co-founded the Center for Humane Technology after his internal presentation, “A Call to Minimize Distraction & Respect Users’ Attention,” was widely circulated but not acted upon.

In September 2021, Frances Haugen, a former Facebook product manager, leaked thousands of internal documents to the Wall Street Journal, which reported on them in a series titled “The Facebook Files.” These documents revealed that Facebook’s own research had found that Instagram was “toxic” for a significant percentage of teenage girls, exacerbating body image issues, anxiety, and suicidal ideation. The documents showed that the company was aware of this harm but chose not to implement meaningful changes because they would reduce engagement metrics.

Academic research has corroborated these claims extensively. A 2017 study by the Royal Society for Public Health in the UK found that Instagram was the most damaging social media platform for young people’s mental health. Jean Twenge’s research at San Diego State University documented a sharp increase in depression, self-harm, and suicide among American teenagers beginning around 2012, which she correlated with the widespread adoption of smartphones and social media. Jonathan Haidt’s work, particularly in The Anxious Generation (2024), synthesized evidence linking social media use to the adolescent mental health crisis.

Nir Eyal’s book Hooked: How to Build Habit-Forming Products (2014) serves as something of a confession document, openly describing the four-step “Hook Model” (trigger, action, variable reward, investment) used by technology companies to create habitual product usage. While Eyal framed this as ethically neutral, critics noted that the techniques described were fundamentally the same as those used by casinos and drug dealers.

Debunking / Verification

This theory carries a “confirmed” status because the primary claims have been verified through multiple independent channels. The confirmation comes not from external investigation alone but from direct admissions by the people who designed and managed these systems.

However, some nuances deserve attention. Not all social media harm is the result of intentional design; some negative effects arise from emergent properties of complex systems that designers did not fully anticipate. The degree of intentionality varies across companies and time periods. Early Facebook, for example, may have implemented addictive features more through growth-oriented trial and error than through a deliberate plan to cause addiction.

Additionally, the causal relationship between social media use and mental health outcomes, while supported by substantial correlational evidence, remains debated among researchers regarding magnitude and mechanism. Some scholars, including Andrew Przybylski at the Oxford Internet Institute, have argued that the negative effects of social media on adolescent well-being, while real, are smaller than those from other common factors like sleep deprivation or bullying.

What is not disputed is that platform companies deployed persuasive design techniques with full knowledge of their psychologically compelling properties, that they measured and optimized for engagement metrics they knew correlated with compulsive usage, and that they continued to do so after internal and external evidence documented harmful effects.

Cultural Impact

The confirmation that social media was deliberately engineered for addiction has had profound consequences across culture, politics, and law. The 2020 Netflix documentary The Social Dilemma, directed by Jeff Orlowski, brought these revelations to a mass audience by featuring Harris, Raskin, and other former tech insiders describing the manipulation techniques they had helped build. The film was watched by an estimated 100 million households worldwide and was credited with prompting millions of users to delete social media accounts or restrict their usage.

The issue has become central to political discourse in multiple countries. In the United States, bipartisan concern about social media’s effects on children has been one of the few areas of legislative agreement in an otherwise polarized Congress. Multiple state attorneys general have filed lawsuits against Meta (Facebook’s parent company) alleging that the company knowingly designed features to addict minors. The European Union’s Digital Services Act and the UK’s Online Safety Act both contain provisions addressing algorithmic amplification and addictive design.

The revelations have also sparked a counter-movement in technology design. The “humane technology” movement, led by Harris and Raskin’s Center for Humane Technology, advocates for design practices that respect users’ time and psychological well-being. Apple introduced Screen Time features in iOS 12 (2018), and Google added Digital Wellbeing tools to Android, effectively acknowledging that the products running on their platforms could be harmful. The concept of “digital wellness” has become a recognized field of study and practice.

The broader cultural conversation about attention, addiction, and technology that these revelations catalyzed represents one of the most significant shifts in how societies understand the relationship between humans and digital technology.

Timeline

- 1998 — B.J. Fogg founds the Persuasive Technology Lab at Stanford University

- 2006 — Aza Raskin invents the infinite scroll at Humanized

- 2009 — Facebook introduces the Like button, designed by Leah Pearlman and Justin Rosenstein

- 2013 — Tristan Harris circulates internal presentation at Google titled “A Call to Minimize Distraction & Respect Users’ Attention”

- 2014 — Nir Eyal publishes Hooked: How to Build Habit-Forming Products

- 2016 — Harris leaves Google and begins publicly advocating for humane technology

- November 2017 — Sean Parker publicly admits Facebook was designed to exploit psychological vulnerability

- December 2017 — Chamath Palihapitiya expresses “tremendous guilt” about Facebook’s addictive feedback loops at Stanford

- 2018 — Harris and Raskin co-found the Center for Humane Technology; Apple introduces Screen Time

- 2020 — The Social Dilemma documentary reaches an estimated 100 million households on Netflix

- September 2021 — Frances Haugen leaks internal Facebook documents to the Wall Street Journal (“The Facebook Files”)

- October 2021 — Haugen testifies before the US Senate Commerce Committee on Facebook’s harmful effects on children

- 2022 — European Union passes the Digital Services Act with provisions on algorithmic transparency

- 2023 — Multiple US state attorneys general file lawsuits against Meta over addictive design targeting minors; UK passes Online Safety Act

- 2024 — Jonathan Haidt publishes The Anxious Generation, synthesizing evidence on social media and adolescent mental health

Sources & Further Reading

- Harris, Tristan. “A Call to Minimize Distraction & Respect Users’ Attention.” Internal Google presentation, 2013. Available through the Center for Humane Technology.

- Eyal, Nir. Hooked: How to Build Habit-Forming Products. Portfolio/Penguin, 2014.

- Twenge, Jean M. iGen: Why Today’s Super-Connected Kids Are Growing Up Less Rebellious, More Tolerant, Less Happy. Atria Books, 2017.

- Haidt, Jonathan. The Anxious Generation: How the Great Rewiring of Childhood Is Causing an Epidemic of Mental Illness. Penguin Press, 2024.

- Orlowski, Jeff, dir. The Social Dilemma. Netflix, 2020.

- Horwitz, Jeff et al. “The Facebook Files.” Wall Street Journal, September-October 2021.

- Zuboff, Shoshana. The Age of Surveillance Capitalism. PublicAffairs, 2019.

- Royal Society for Public Health. “#StatusOfMind: Social Media and Young People’s Mental Health and Wellbeing.” RSPH, 2017.

